Look Ma, No RAG! Building Agent-Native Knowledge Systems

I built this over three months. Then Karpathy posted about the same pattern. Here's the systematized, repeatable version - born from downloading papers, organizing them, and realizing the index file was the whole trick.

TL;DR - At project scale, knowledge isn't primarily a search problem - it's a navigation problem. You don't need vector databases or embeddings. Use index files as routing tables, store content as structured markdown, and let the agent follow links in 2-3 hops to grounded answers. This is a pattern for project-scale knowledge systems - not internet-scale retrieval.

Clone the repo, run one slash command, seed it with your files - working knowledge base in five minutes. Skip to "Try It" if you want to start there.

In January, I started downloading research papers. No grand plan - I just needed to understand the field. What does the academic literature say about autonomous coding agents? What are companies actually doing? Which blogs and papers are worth reading?

I'd search with ChatGPT or Claude to find relevant work, download the PDFs, pull the LaTeX source from arXiv, and save everything as markdown files that Claude Code could work with. I wrote scripts to automate the arXiv pipeline - fetch the LaTeX, convert to markdown, strip the boilerplate - because doing it by hand for every paper wasn't sustainable. Not everything had LaTeX source available, so I also needed scripts to extract text from PDFs directly. After a few weeks I had dozens of papers and blog posts sitting in a directory. I could find things if I remembered where I'd put them, but the collection was growing faster than my ability to navigate it.

So I started organizing. First just directories - papers in one place, blog posts in another. Then summaries, because reading a 30-page paper every time I needed one fact wasn't working. Then I noticed I kept asking the same kinds of questions across papers, so I built an index file that listed each summary with a one-line description of when you'd want to read it.

That index file turned out to be the key insight. When I pointed Claude Code at the directory and asked a question, it would read the index, pick the right summary, and give me a grounded answer. Grounded in the actual papers I'd collected, not just the model's parametric memory. Typically three file reads, no vector database, no embedding pipeline. Just structured markdown and an agent that could follow a routing table.

From there, I needed the same thing for a code-agent KB - cheatsheets, property change tables, routing tables that got the agent to the right file in three hops. Then a strategic synthesis KB across 92 conversations about architecture, methodology, and design decisions. By the third one, patterns had crystallized: two types of knowledge bases, routing tables instead of embeddings, federation across projects, different ingestion workflows for different kinds of source material.

I later found that projects like OpenScholar (Allen AI's system for answering scientific questions across 45 million papers) and FutureHouse (the Eric Schmidt-backed research lab behind PaperQA2) had been doing something similar at massive scale - full RAG with embedding vectors and dedicated retrievers. But at the scale of a personal or project knowledge base - hundreds of files, not millions - you don't need that infrastructure. Karpathy arrived at the same conclusion: agentic CLIs with file exploration handle this scale well.

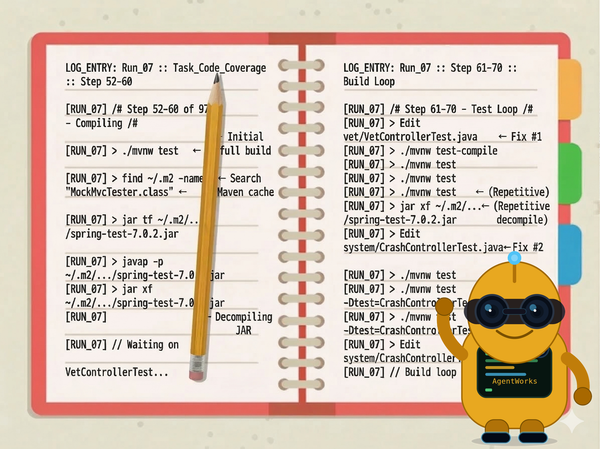

Starting in February, I formalized the work into a methodology. The git log tells the story:

- Phases, templates, the discovery-then-execution split

- Knowledge Base Architecture - two KB types, the librarian layer, federation. This came after building the first few KBs - the theory followed the practice

- Steward variant for ongoing project maintenance. Hierarchical status reporting. The methodology now covers projects that don't end

- KB navigation infrastructure - CLAUDE.md as a session bridge, routing tables at every level

- Three new slash commands: /forge-eval-agent, /forge-kb, /forge-project. The methodology becomes executable

- Curator intake protocol and reference harvest guide - how new material enters a KB without breaking the structure

- AGENTS.md and PLANS.md convergence research. The industry is starting to converge on similar patterns

- Eval-agent hardening: sweep conventions, domain-specific stopping conditions. The methodology gets opinionated about experiment design

28 commits over two months. Each one driven by a real project that needed something the methodology didn't have yet.

The companion story is how the research partner itself evolved. It started with ChatGPT conversations - dozens of them, many recorded using ChatGPT's voice mode while walking in the park. D-1 through D-60, each one a working session about agent architecture, experiment design, research methodology. I exported them as markdown files and dumped them into a repo. Then more conversations: an E-series picking up where D left off, an R-series for external references, an F-series for ongoing research threads, an H-series for ecosystem analysis.

By March, the research KB had 83 commits, 92+ synthesized conversations, a seven-theme analysis framework, cross-cutting insights, action items, and journal entries tracking daily decisions. It was ingesting status reports from a dozen satellite projects. It could answer questions like "what did we decide about judge architecture in late February?" with citations to specific conversations.

The methodology and the research partner evolved together. Every time I needed a new capability - federation, ingestion protocols, health checks - I'd build it for the research KB first, then formalize it in the methodology. Practice led. Theory followed.

Then Karpathy Posted

Last week, Andrej Karpathy posted about using LLMs to build personal knowledge bases. The post crossed a million views and spawned a gist that became an "idea file" for the pattern. The whole thing resonated, but one line hit hardest:

"I think there is room here for an incredible new product instead of a hacky collection of scripts."

That's what I've been building toward with Agento Studio - a methodology and toolkit for building, testing, and improving AI agent systems. The knowledge layer is the first piece I'm writing about. I've been using it across a dozen projects, and it ships with slash commands for scaffolding knowledge bases, eval-agent projects, software projects, and ongoing stewardship. The other pieces get their own posts.

The Core Idea

At project scale - hundreds of files, not millions - knowledge isn't primarily a search problem. It becomes a navigation problem. The underlying insight is one that Karpathy's post articulated perfectly: LLMs are remarkably good at navigating structured markdown. Given a routing table with "Read when..." conditions, the model reliably selects the correct file without search. I've been calling this "JIT Context". To be precise, it is still retrieval, just done through file-system navigation instead of embeddings, vector databases, or offline indexing pipelines. The agent retrieves context just in time, progressively discovering what it needs by reading an index, following a routing table to the right file, then reading the detail. Each hop narrows the scope. It's built on the file exploration capabilities that agentic CLIs already have - read, grep, list - just pointed at structured content instead of source code.

The workflow follows naturally: ingest raw material, compile it into structured knowledge, run Q&A against it, use health checks to improve it over time. The knowledge base is a living artifact, not a static dump.

Where Agento Studio picks up is in systematizing that pattern - making it repeatable across projects, typed by use case, and connected across knowledge boundaries.

What Agento Studio Does

Two Types of Knowledge Base

I'm building agents for software development. But to build good agents, I needed a good research partner - an AI I could have ongoing conversations with about the field, my projects, my decisions. That research partner needed a knowledge base: broad, synthesized across hundreds of sources, organized by themes, able to answer "what have we decided about X across the last three months?" I interact with it through Claude Code - just chat, backed by structured knowledge. I've been thinking about other interfaces, but conversation in the terminal has been working surprisingly well.

The software projects themselves need a different kind of knowledge base. Less broad, more deep. Focused on the specific domain the software addresses - API change tables, framework configuration, version-specific gotchas. An agent working on a migration task doesn't need to know about my research methodology. It needs to find the right cheatsheet in three hops.

Agento Studio formalizes this into two types:

Research-Partner KB - broad, question-driven synthesis for a human-AI research pair. Theme-based routing, conversation synthesis across dozens of sources, cross-cutting analysis. Built for strategic thinking and ongoing inquiry.

Code-Agent KB - narrow, task-driven lookup consumed by agents during execution. Every file gets YAML frontmatter:

---
task_types: [migration]
artifact_type: cheatsheet
subjects: [spring-boot-3, javax-to-jakarta]
see_also: [property-changes.md]
---

Task type, artifact kind, subject tags - drawn from a controlled vocabulary. A simple grep across frontmatter finds every migration-related file, or every decision matrix, or everything tagged with a specific framework version. The same metadata powers the health checks: if a file uses a subject tag that isn't in the vocabulary, or a see_also reference that doesn't resolve, the validation catches it.
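Here is a minimal sketch of that lookup and the vocabulary check, in Python rather than grep so it can be tested. It assumes the flat frontmatter shape shown above (`key: value` or `key: [a, b]`); the function names and the in-memory `files` mapping are hypothetical and are not Agento Studio's actual implementation. A real KB would probably use a YAML library instead of this hand-rolled parser.

```python
def parse_frontmatter(text: str) -> dict:
    """Parse flat frontmatter (`key: value` or `key: [a, b]`) between --- lines.

    Deliberately minimal: no nesting, no multi-line values.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta = {}
    for line in lines[1:]:
        if line.strip() == "---":  # closing delimiter
            return meta
        key, sep, value = line.partition(":")
        if not sep:
            continue  # blank or malformed line
        value = value.split(" #", 1)[0].strip()  # drop a trailing comment
        if value.startswith("[") and value.endswith("]"):
            meta[key.strip()] = [v.strip() for v in value[1:-1].split(",") if v.strip()]
        else:
            meta[key.strip()] = value
    return {}  # no closing delimiter: treat as no frontmatter


def tags(meta: dict, field: str) -> list:
    """Return a field's values as a list, whether it was scalar or list."""
    value = meta.get(field, [])
    values = value if isinstance(value, list) else [value]
    return [v for v in values if v]


def find_by_tag(files: dict, field: str, value: str) -> list:
    """Paths whose frontmatter `field` contains `value` (the grep equivalent)."""
    return sorted(p for p, text in files.items()
                  if value in tags(parse_frontmatter(text), field))


def vocabulary_violations(files: dict, vocabulary: dict) -> list:
    """(path, field, tag) for every tag missing from the controlled vocabulary."""
    problems = []
    for path, text in sorted(files.items()):
        meta = parse_frontmatter(text)
        for field, allowed in vocabulary.items():
            problems.extend((path, field, tag)
                            for tag in tags(meta, field) if tag not in allowed)
    return problems
```

`find_by_tag(files, "task_types", "migration")` plays the role of the grep, and `vocabulary_violations` is the health-check half: any tag outside the vocabulary comes back with the file and field it was found in.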

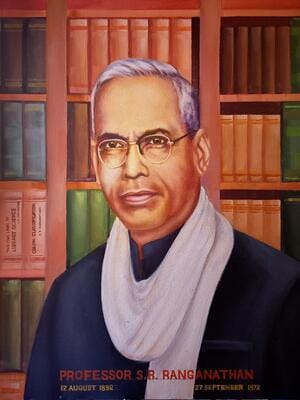

A Flat-File Graph Database (or: What Librarians Knew in 1933)

The frontmatter design didn't come from database theory. It came from library science.

In 1933, S.R. Ranganathan published his Colon Classification system in India - the first faceted classification. His insight: don't force knowledge into one hierarchy. A book about "the history of rice cultivation in Japan" shouldn't have to choose between History, Agriculture, and Japan. Instead, classify it along independent dimensions - any patron can find it from their own angle.

That's exactly the problem with agent knowledge bases. A file about security API changes is needed by an agent doing migration work and an agent doing a security review. If you organize by task, the security reviewer can't find it. If you organize by topic, the migrator can't find it. Ranganathan's answer: classify along independent axes and let each searcher query from their own dimension.

The Code-Agent KB uses three facet axes in YAML frontmatter:

---
task_types: [migration, review]           # What task brings you here?
artifact_type: remediation-guide          # What kind of document is this?
subjects: [spring-security, http-clients] # What domain topics does it cover?
related:
  see_also:
    - java/type-changes.md                # Cross-domain link
  broader: [spring/boot-2-to-3/index.md]  # Parent in the hierarchy
---

The task_types, artifact_type, and subjects are drawn from a controlled vocabulary - a single file that enumerates every allowed value, like the Library of Congress Subject Headings but for your specific domain. An agent doing migration work greps for task_types:.*migration. An agent doing review work greps for task_types:.*review. Same file, different access path.

The see_also and broader fields are where it becomes a graph. Every cross-reference is a directed edge. Every broader link points to the parent index.md. The result is a navigable graph - nodes are files, edges are references - with effectively zero additional infrastructure. No Neo4j, no triple store. Just YAML fields in markdown files, traversable with grep and read.
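To make the graph claim concrete, here is a small sketch that treats already-parsed frontmatter as an adjacency list and walks it breadth-first. It assumes the metadata is available as a mapping from file path to its `see_also` and `broader` lists, with all paths relative to the KB root; `build_edges` and `reachable` are illustrative names, not part of the toolkit.

```python
from collections import deque


def build_edges(meta_by_file: dict) -> dict:
    """Directed edges: each file points at its see_also and broader targets."""
    return {
        path: list(meta.get("see_also", [])) + list(meta.get("broader", []))
        for path, meta in meta_by_file.items()
    }


def reachable(edges: dict, start: str, max_hops: int) -> dict:
    """Breadth-first walk: map each file reachable from `start` to its hop count."""
    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if hops[node] == max_hops:
            continue  # hop budget spent on this branch
        for target in edges.get(node, []):
            if target not in hops:
                hops[target] = hops[node] + 1
                queue.append(target)
    return hops
```

Files that only appear as targets (an index.md with no outgoing links, say) still show up in the result; they just have no edges of their own.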

The routing tables in each index.md file borrow from another library science concept: the reference interview. When you walk into a library and say "I need something about security," the librarian doesn't hand you the card catalog. They ask: "Are you migrating an application, or auditing an existing one?" Then they point you to the right shelf. The "Read when..." column in a routing table does the same thing - it encodes the reference librarian's expertise about who needs what and why.

| File | Read when... |
|------|-------------|
| `security-changes.md` | Migrating Spring Security configuration |
| `property-changes.md` | Updating deprecated properties |

Three hops: root index → domain index → detail file. The same navigation depth a good library achieves: reference desk → section → shelf.
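The hop sequence can be sketched in a few lines of Python. In practice the agent itself reads the "Read when..." column and decides; the `keyword_chooser` here is only a crude stand-in for that judgment so the sketch runs without a model. All names are hypothetical, and `files` is an in-memory mapping from path to markdown content.

```python
import posixpath
import re


def parse_routing_table(markdown: str) -> list:
    """Extract (file, read_when) rows from a two-column markdown routing table."""
    rows = []
    for line in markdown.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue
        cells = [c.strip() for c in line.strip("|").split("|")]
        if len(cells) < 2 or set(cells[0]) <= set("-: "):
            continue  # too short, or the |---|---| separator row
        if cells[0].lower() == "file":
            continue  # header row
        rows.append((cells[0].strip("`"), cells[1]))
    return rows


def keyword_chooser(question: str):
    """Stand-in for the agent: pick the row sharing the most words with the question."""
    words = set(re.findall(r"[a-z0-9]+", question.lower()))

    def choose(rows):
        best, best_score = None, 0
        for target, condition in rows:
            score = len(words & set(re.findall(r"[a-z0-9]+", condition.lower())))
            if score > best_score:
                best, best_score = target, score
        return best  # None means no row matched: a gap to report

    return choose


def navigate(files: dict, start: str, choose, max_reads: int = 3) -> list:
    """Follow routing tables from `start`, returning the list of files read."""
    trail = [start]
    while len(trail) < max_reads:
        rows = parse_routing_table(files[trail[-1]])
        target = choose(rows) if rows else None
        if target is None:
            break  # detail file reached, or nothing matched
        nxt = posixpath.normpath(posixpath.join(posixpath.dirname(trail[-1]), target))
        if nxt not in files:
            break  # broken link: stop and report the gap
        trail.append(nxt)
    return trail
```

A trail shorter than three entries is the "report gaps" case: either no routing row matched the question or a link pointed at a file that doesn't exist.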

Agent-Native Routing

The CLAUDE.md file in each project isn't documentation - it's a prescriptive session bridge. It tells the agent how to operate:

- Which files to read first, which to skip

- What questions this KB can answer and what it can't - negative knowledge prevents wasted search

- The exact navigation algorithm: read index → follow routing → read detail → report gaps

This is the difference between a knowledge base that contains information and one that tells the agent how to navigate it.

Federation

A single KB is useful. Multiple KBs that know about each other are more useful.

A KB-FEDERATION.md catalogs all KBs in your ecosystem with "Read when..." routing:

| KB | Entry Point | Read when... |
|----|------------|--------------|
| domain-knowledge | index.md | Task involves code changes |
| research-corpus | CLAUDE.md | Question involves academic patterns |
| strategic-synthesis | THEME-INDEX.md | Question involves decisions |

An agent that can't find an answer locally checks the federation and knows where to look next. No centralized search - just routing tables all the way down. I run six federated KBs across my projects, and an agent in any one of them can navigate to the others.
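As a rough sketch of that fallback step, the snippet below parses a federation table and returns the KBs whose "Read when..." condition overlaps with the question. As with any routing table, the real matching is done by the agent reading the conditions; the word-overlap test and the `STOPWORDS` set are assumptions made so the example is self-contained, and the function names are hypothetical.

```python
import re

# Generic words in the "Read when..." column that carry no routing signal
# (an assumption based on the example table, not a fixed list).
STOPWORDS = {"task", "question", "involves"}


def parse_federation(markdown: str) -> list:
    """Extract {kb, entry_point, read_when} entries from a three-column table."""
    entries = []
    for line in markdown.splitlines():
        cells = [c.strip() for c in line.strip().strip("|").split("|")]
        if len(cells) != 3 or cells[0] == "KB" or set(cells[0]) <= set("-: "):
            continue  # not a data row (prose, header, or separator)
        entries.append({"kb": cells[0], "entry_point": cells[1], "read_when": cells[2]})
    return entries


def federation_candidates(question: str, entries: list) -> list:
    """(kb, entry_point) pairs worth consulting after a local miss."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    hits = []
    for entry in entries:
        condition = set(re.findall(r"[a-z]+", entry["read_when"].lower())) - STOPWORDS
        if words & condition:
            hits.append((entry["kb"], entry["entry_point"]))
    return hits
```

An empty result is itself useful: it tells the agent that no KB in the federation claims the question, which is a gap to report rather than a reason to keep searching.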

Health Checks

Knowledge bases rot. Links break, routing tables go stale, vocabulary drifts, summaries diverge from source documents. Agento Studio includes a /kb-reindex command that runs seven checks across all federated KBs:

- Cross-reference integrity - every see_also target resolves to an actual file

- Vocabulary compliance - subject tags match the controlled vocabulary

- Orphan detection - files that exist but aren't referenced by any index

- Index freshness - routing tables that don't cover all files in their directory

- Bidirectional links - for peer relationships, if A references B, B should reference A

- Concept coverage - defined terms and taxonomies appear in the glossary

- Summary-source consistency - numbers and taxonomy names in summaries match the source docs
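Three of these checks are simple enough to sketch directly. The functions below are an illustration, not the /kb-reindex implementation: they assume you already have a mapping from each file to its see_also targets, plus the set of files that some index references, and every name is hypothetical.

```python
def broken_references(see_also: dict) -> list:
    """Cross-reference integrity: (source, target) pairs whose target is not a known file."""
    return sorted(
        (src, dst)
        for src, targets in see_also.items()
        for dst in targets
        if dst not in see_also
    )


def orphans(all_files: set, indexed: set) -> list:
    """Orphan detection: files that no index references."""
    return sorted(all_files - indexed)


def one_way_links(see_also: dict) -> list:
    """Bidirectional links: A references B, but B does not reference A."""
    return sorted(
        (src, dst)
        for src, targets in see_also.items()
        for dst in targets
        if dst in see_also and src not in see_also[dst]
    )
```

Each function returns a sorted list of findings, so an empty list means the check passed and the output is stable enough to diff between runs.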

There's also a link verification script that checks every path in KB-FEDERATION.md and every routing table across projects. It runs in seconds, catches drift before it compounds, and distinguishes real breakage from false positives in prose references.

The real validation came from a different test. I wrote 25 questions against one of my research KBs - questions I knew the answers to - and had the AI answer them cold. The answers were good. Then I cloned the entire knowledge base to a different machine, pointed a fresh Claude Code session at it, and ran the same 25 questions. Comparably good answers. No history, no prior context, no warm-up - just the structured knowledge base and an agent that had never seen it before.

It felt like that scene in The Matrix where Trinity downloads the helicopter pilot program. "Can you fly that thing?" "Not yet." Ten seconds later, she can.

That's the difference between knowledge transfer and model recall. The agent doesn't need to have been there for the conversations or the research. It just needs the routing tables and the structured content, and it can operate as if it had been. You're a git clone away from sharing everything your agent knows.

What 339 Files Actually Looks Like

The 25-question test was on one knowledge base. Across all my federated KBs, the current count is 1,772 markdown files. That sounds unmanageable. It isn't - because not all files serve the same role.

Here's the research-partner KB that synthesizes 92+ conversations, broken down by what the files actually are:

| Category | Files | How they're navigated |
|---|---|---|
| Conversation transcripts | 149 | Bulk archive - accessed via CONVERSATION-INDEX by D/E/F/H-series ID, not routing tables |
| Synthesis output | 42 | Theme docs, action items, cross-cutting analysis - the distilled product of those 149 conversations |
| Plans, briefs & learnings | 67 | Actionable work - project briefs, Forge methodology tracking, queued tasks |
| Cross-project research | 23 | Outbound queries and responses for satellite projects |
| Journal entries | 20 | Daily session notes - chronological, read by date |
| Analysis deep-dives | 17 | One-off investigations (Markov chains, TUI frameworks, agent behavior patterns) |
| Experiment protocols | 7 | Structured session logs from rehydration and setup experiments |
| KB infrastructure | 10 | CLAUDE.md, KB-FEDERATION, KEY-CONCEPTS, VOCABULARY - the routing layer itself |
| Supporting & misc | 4 | Templates, prompts |
| Total | 339 | |

The routing table in CLAUDE.md - the 47-row navigation table that tells the agent where to find anything - manages roughly 190 of these files. The conversation transcripts are 44% of the file count but are bulk-archived with their own separate index. They don't bloat the routing surface at all.

Across the federated KBs:

| KB | Files | What it contains |
|---|---|---|
| Experiment driver | 530 | Eval framework, sweep configs, variant results |
| Research synthesis | 339 | Conversations, themes, strategy, journal |
| Spring ecosystem data | 279 | Framework reference data, property tables, compatibility matrices |
| Academic research corpus | 221 | Paper summaries, blog analysis, citation tracking |
| Code-agent domain knowledge | 210 | Domain-specific cheatsheets, decision tables, routing tables |
| Agent CLI project | 97 | Design docs, roadmap, implementation notes |
| Methodology | 68 | Templates, concept docs, guides |
| Judge research | 28 | Judge framework analysis |
| Total | 1,772 | |

The interesting question is: does this scale? Each root index.md has a practical budget of about 100 lines. At a couple hundred files, routing tables fit comfortably. At 300-400, the root index hits its budget and you need hierarchical delegation - "for Spring questions, see spring/index.md." The experiment-driver KB is already at 530 files and working fine, though that's observation, not a controlled experiment.
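A budget like this is easy to check mechanically. The sketch below flags a root index that has outgrown roughly 100 lines and lists the directories that hold enough files to deserve their own index. The 100-line figure comes from the paragraph above; the `min_files` threshold of 10 and both function names are assumptions for illustration.

```python
from collections import Counter

LINE_BUDGET = 100  # the practical root-index budget mentioned above


def over_budget(index_text: str, budget: int = LINE_BUDGET) -> bool:
    """True when an index file has grown past its line budget."""
    return len(index_text.splitlines()) > budget


def delegation_candidates(paths: list, min_files: int = 10) -> list:
    """Top-level directories with enough files to justify their own index.md.

    `min_files` is an arbitrary illustrative threshold, not a measured one.
    """
    counts = Counter(p.split("/", 1)[0] for p in paths if "/" in p)
    return [(d, n) for d, n in counts.most_common() if n >= min_files]
```

When `over_budget` fires, each directory returned by `delegation_candidates` is a place where a single "for Spring questions, see spring/index.md" row could replace a block of per-file rows in the root index.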

There's a designed-in fallback. The code-agent KB tags every file with YAML frontmatter - task_types, subjects, artifact_type - drawn from a controlled vocabulary. A simple grep across frontmatter can find every migration-related file, or every decision matrix, or everything tagged with a specific framework version. Claude Code already uses grep as a fallback when routing tables don't surface the right file. At some file count, grep-over-frontmatter likely overtakes curated routing tables as the primary navigation strategy. Finding that crossover point is exactly the kind of experiment the eval-agent methodology was built for - same KB, same questions, controlled comparison across file counts.

Codified Ingestion

New material arrives constantly - conversations, papers, status reports, raw research. Agento Studio codifies how each type gets processed:

- Conversations → synthesis pipeline (summarize → extract themes → cross-cutting analysis)

- Papers → curator intake (classify, tag with frontmatter, update routing tables)

- Status reports → incremental theme updates (map to existing themes, update action items)

The methodology describes when to do a full re-synthesis vs. an incremental append, and tracks provenance so you can trace any conclusion back to its source conversation or document.

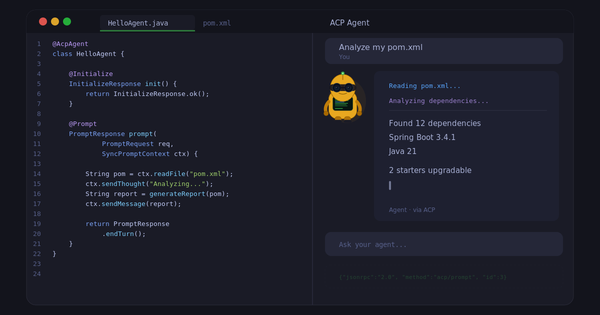

Slash Commands, Not Scripts

Every Agento Studio operation is a Claude Code slash command. /forge-research bootstraps a general research project. /forge-research-kb creates a federated research-partner knowledge base - the kind described in this post.

/forge-project - scaffold a software project

/forge-research - bootstrap a research project

/forge-kb - structure a corpus for Q&A

/forge-research-kb - bootstrap a federated research-partner KB

/forge-eval-agent - set up agent evaluation with judges

/forge-steward - bootstrap ongoing project stewardship

/collect-status - produce a timestamped status report

/ingest-status - absorb a project's status report into the KB

/kb-reindex - run health checks across all federated KBs

Clone the repo, open Claude Code, type /forge-kb ~/my-docs - you're structuring a knowledge base in five minutes. No config files, no path setup. The commands pull templates and concept docs from the same repo.

Working from a different project? Launch with claude --add-dir ~/agento-studio and the commands are available alongside your project's own context.

Beyond Knowledge Bases

The knowledge layer is one piece of a larger system. Agento Studio also ships with project variants for agent evaluation, software development, research, and ongoing stewardship - each with its own feedback loops and project structure. Those get their own posts.

Try It

git clone https://github.com/markpollack/agento-studio.git ~/agento-studio

cd ~/agento-studio

claude

# then: /forge-research-kb ~/my-research-kb "your topic here"

The getting-started guide walks through building your first research-partner KB end-to-end - from cloning the repo to querying across paper summaries. Five seed papers, 20 minutes, a working research agent at the end.

The README has the full command reference. The documentation covers the methodology, getting started, and all project variants - 13 concept docs, 11 templates, 10 slash commands, and 4 project variants.

If you're at Spring I/O Barcelona next week, I'm running a hands-on workshop on this. Come build a knowledge base for your agent and see the difference it makes.